
The above function defines the scatter plot for the feature values and their corresponding targets.

x holds all the feature values and y holds all the target values.

Now, we have 1 feature and 200 sample values in total. import numpy as npįrom sklearn.datasets import make_regression x, y = make_regression(n_samples=200, n_features=1, noise=20, bias=0) Let’s get our data from Scikit-Learn first. Using Scikit-Learn Make RegressionĪs this post is meant to be an introductory one, so we will be using Scikit-Learn’s make_regression module to generate random regression data. So, fire up your favorite IDE or Jupyter Notebook and follow along. For that, we have to optimize the parameters \(\beta_0\) and \(\beta_1\) using a cost function (also known as objective function). So, we will have to build a linear model by using the features x and target y that should be a straight line plot on a graph.

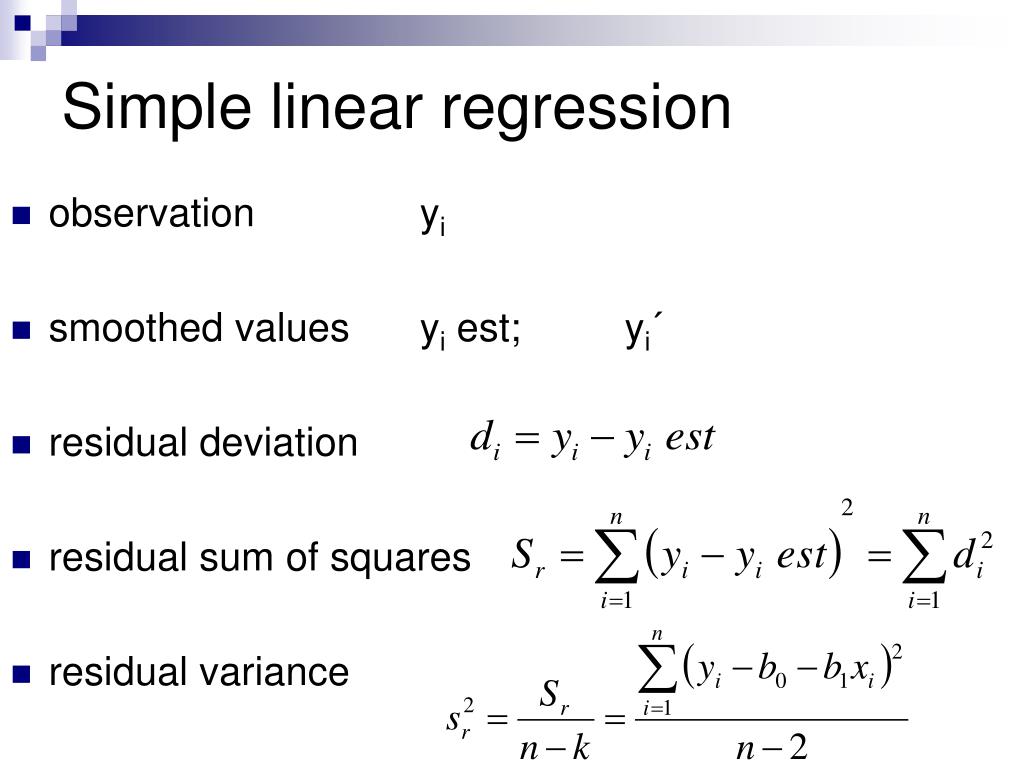

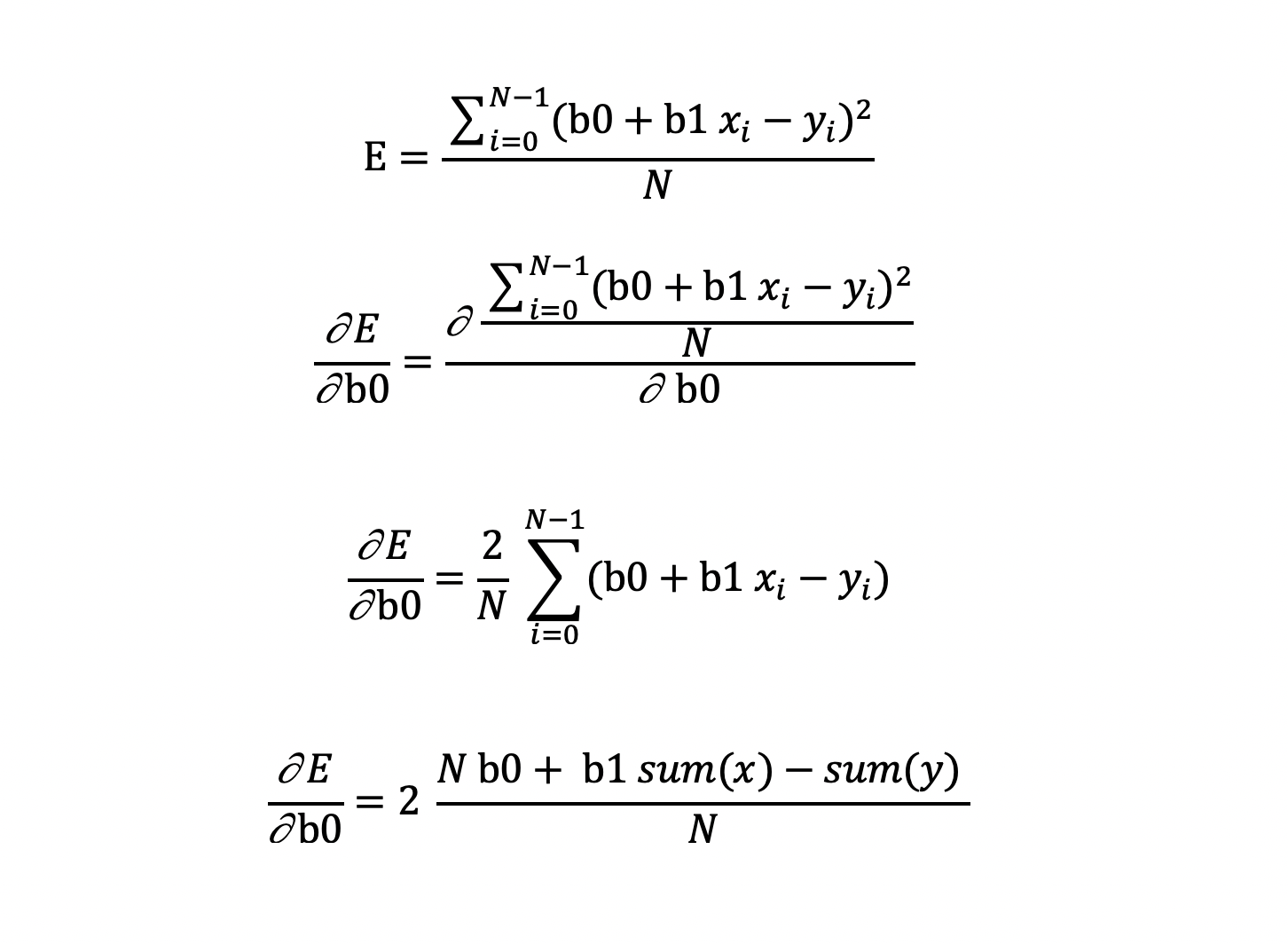

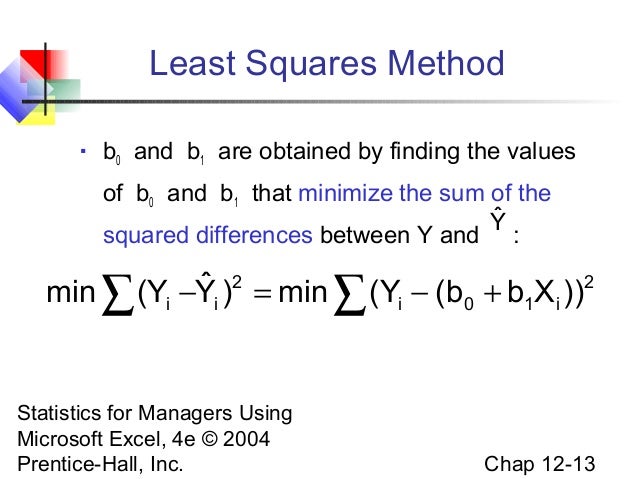

Where \(\beta_0\) is the intercept parameter and \(\beta_1\) is the slope parameter. Our job is to find the value of a new y when we have the value of a new x. Let the feature variable be x and the output variable be y. In simple linear regression, we have only one feature variable and one target variable. The Linear Regression Equationīefore diving into the coding details, first, let’s know a bit more about simple linear regression. It will teach you all the basics, including the mathematics behind linear regression, and how it is actually used in machine learning. Note: If you want to get a bit more familiarity with Linear Regression, then you can go through this article first. In this article, we will be implementing Simple Linear Regression from Scratch using Python. Also, it is quite easy for beginners in machine learning to get a grasp on the linear regression learning technique.

Linear Regression is one of the oldest statistical learning methods that is still used in Machine Learning.
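With the data generated, the next step is to estimate \(\beta_0\) and \(\beta_1\). This post does not show the fitting step, so the sketch below uses one common approach as an assumption: the closed-form ordinary least-squares solution. This solution minimizes the mean squared error cost exactly. The helpers are written in plain Python so they run without NumPy or Scikit-Learn. The names `fit_simple_linear_regression`, `predict`, and `mse` are hypothetical.

```python
from statistics import fmean


def fit_simple_linear_regression(xs, ys):
    """Return (beta_0, beta_1) minimizing the mean squared error (closed-form OLS)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    x_mean = fmean(xs)
    y_mean = fmean(ys)
    # Slope: sum of cross-deviations over sum of squared x-deviations.
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    beta_1 = sxy / sxx
    # Intercept: the fitted line passes through the point (x_mean, y_mean).
    beta_0 = y_mean - beta_1 * x_mean
    return beta_0, beta_1


def predict(beta_0, beta_1, x):
    """Apply y = beta_0 + beta_1 * x to a new feature value."""
    return beta_0 + beta_1 * x


def mse(beta_0, beta_1, xs, ys):
    """Mean squared error cost of the line over the data."""
    return fmean((y - predict(beta_0, beta_1, x)) ** 2 for x, y in zip(xs, ys))


if __name__ == "__main__":
    xs = [0, 1, 2, 3, 4]
    ys = [2 + 3 * x for x in xs]  # points lying exactly on y = 2 + 3x
    b0, b1 = fit_simple_linear_regression(xs, ys)
    print(b0, b1)  # prints 2.0 3.0
```

On the make_regression output, x is a (200, 1) array. It would need flattening, for example with `x.ravel()`, before it is passed to these list-based helpers.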
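The post says that \(\beta_0\) and \(\beta_1\) are found by optimizing a cost function. A common iterative way to do that is batch gradient descent on the mean squared error \(J = \frac{1}{n}\sum_i (\beta_0 + \beta_1 x_i - y_i)^2\). The following is a minimal sketch under that assumption. The function name `gradient_descent`, the learning rate, and the epoch count are illustrative choices, not values from the article.

```python
def gradient_descent(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = beta_0 + beta_1 * x by batch gradient descent on the MSE cost."""
    n = len(xs)
    if n == 0 or n != len(ys):
        raise ValueError("need two non-empty sequences of equal length")
    beta_0, beta_1 = 0.0, 0.0  # start from a flat line through the origin
    for _ in range(epochs):
        # Residuals of the current line: prediction minus target.
        errors = [beta_0 + beta_1 * x - y for x, y in zip(xs, ys)]
        # Partial derivatives of J = (1/n) * sum(error ** 2).
        grad_0 = 2.0 / n * sum(errors)
        grad_1 = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        # Step both parameters against their gradients.
        beta_0 -= learning_rate * grad_0
        beta_1 -= learning_rate * grad_1
    return beta_0, beta_1


if __name__ == "__main__":
    xs = [0, 1, 2, 3, 4]
    ys = [2 + 3 * x for x in xs]  # points lying exactly on y = 2 + 3x
    print(gradient_descent(xs, ys))  # close to (2.0, 3.0)
```

If the learning rate is too large relative to the spread of the x values, the updates overshoot and diverge instead of converging. In that case, reduce the learning rate or rescale the feature first.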
